Back in March (and that feels like a lifetime ago), when OpenAI announced the release of GPT-4, one of the most impressive and intriguing demos was of the new multimodal capabilities for ChatGPT. Multimodal meant that you would be able to interact with the chatbot in modalities other than text, such as images and audio. (Eventually we might get video as well.)

The arrival of GPT-4, and the announcement of multimodal chat barely four months after ChatGPT was released, gave many of us the impression that we had entered the really fast takeoff phase of AI adoption, and that the subsequent compounding effects would lead to even faster acceleration. However, months went by, and the multimodal features were still not accessible in ChatGPT. Maybe the hyper-acceleration was not here after all. Or maybe creating an awesome AI product at scale and affordably is still a major challenge.

Finally, last week OpenAI announced that they had started rolling out multimodal capabilities to select premium users. It turned out that the rollout was still pretty gradual, and the timeline for when anyone would be able to get access was unclear. Those who got access seemed to be having a blast with it, and taunted the rest of us with some really cool use cases. Many of them showed up in my X newsfeed. A few cool ones are shown below.

Here is how to use ChatGPT to find Waldo:

Here it is helping with home decoration:

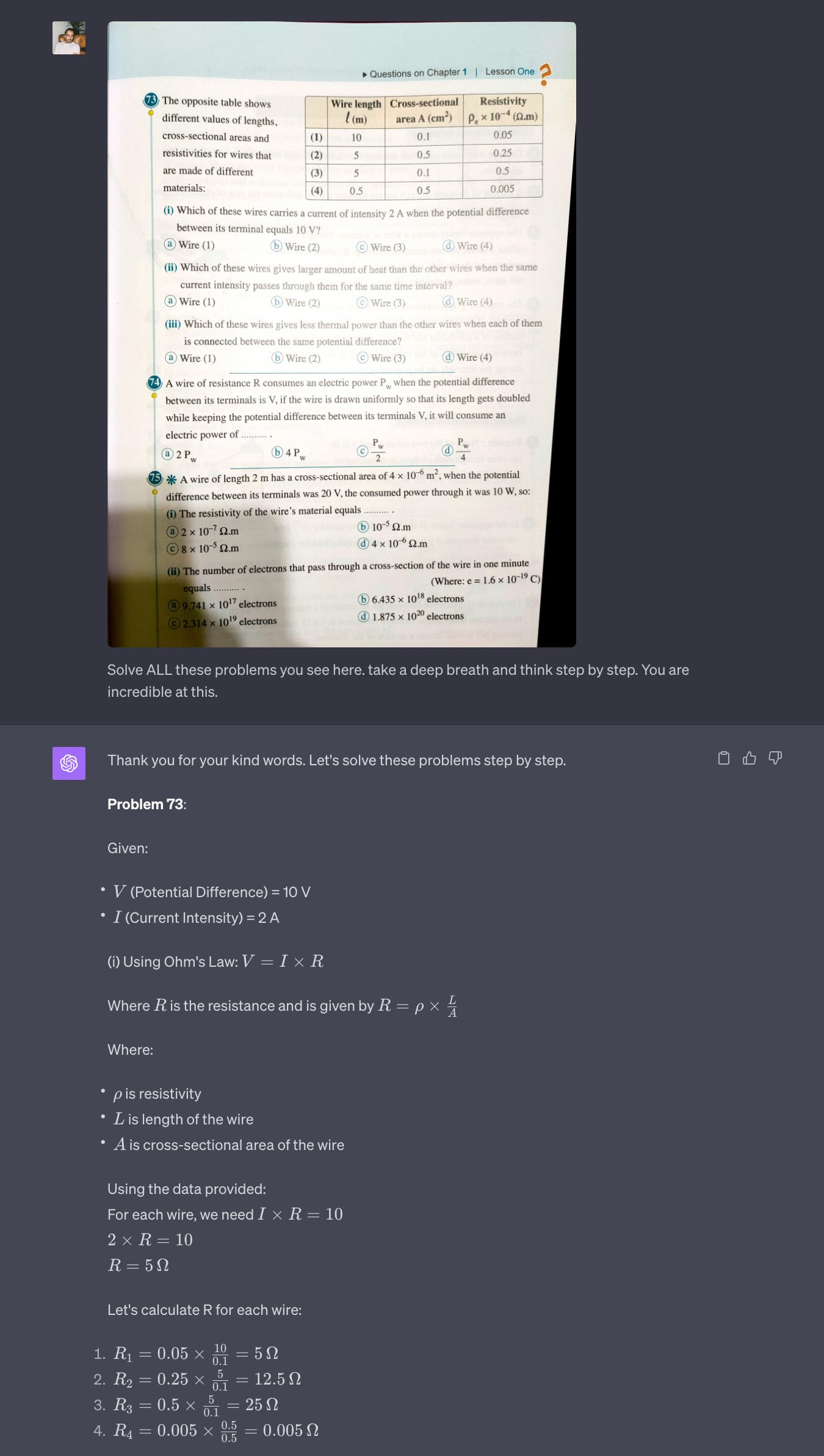

And here is ChatGPT doing homework:

All of the above examples are from Pietro Schirano BTW.

Great as using images in chat might be, a potentially even bigger game changer is voice interaction. Some of the examples I’ve seen are just mind-blowing. Voice is an even more intuitive user interface than text input, and I feel like we are finally entering the age of the real AI-enabled voice assistant. I’ve seen many cool examples online, but this tweet from Peter Yang might be a good illustration of the creative opportunities this kind of technology will open up:

Unfortunately, for days I kept checking my ChatGPT to see if I had gotten access to this technology yet. And each time I’d come back empty-handed. :’( This prompted me to post the following tweet yesterday:

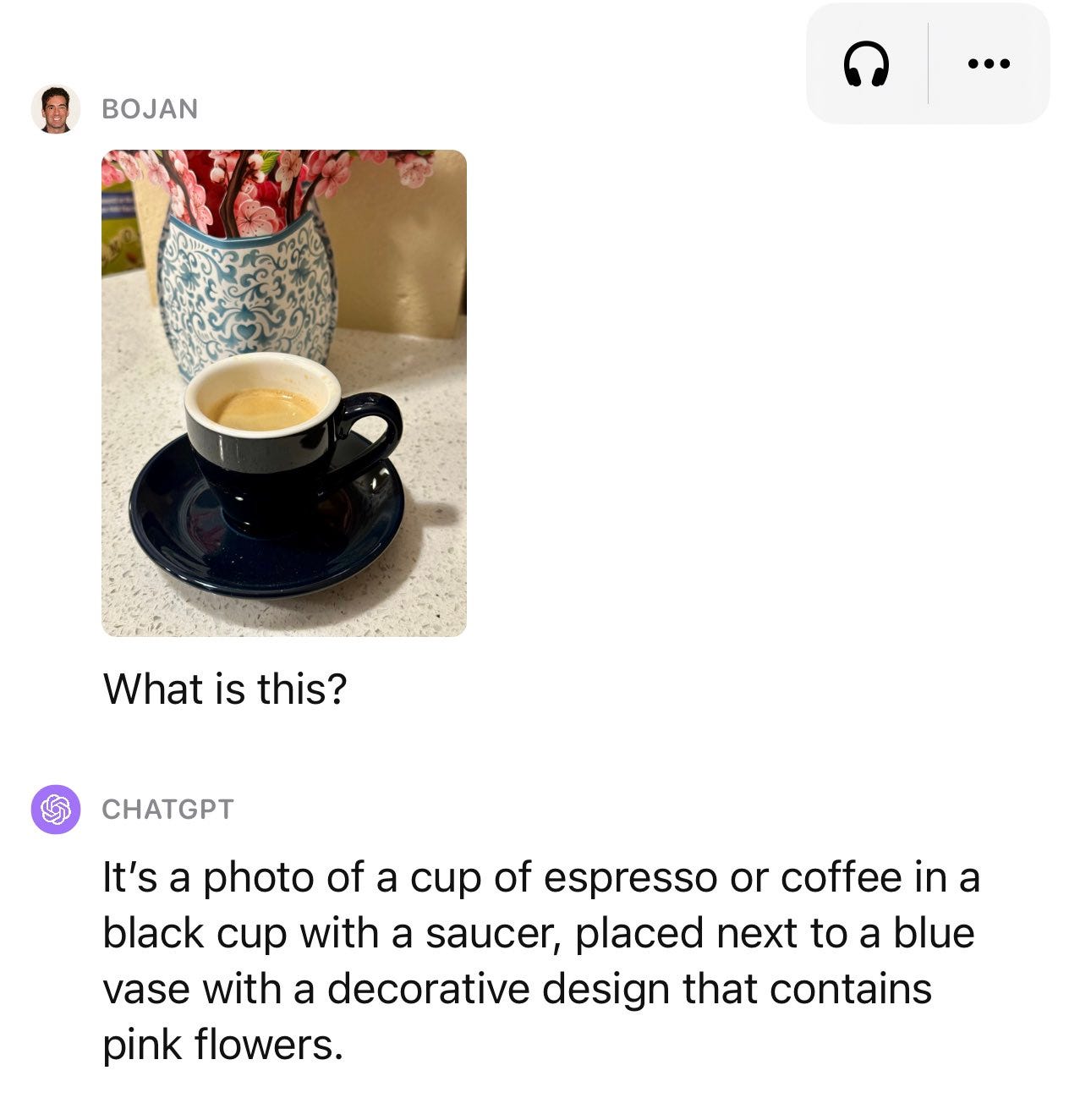

After seeing it, some good folks at OpenAI had mercy on me and gave me access! I just got access late last night, so I have not really had much chance to put it through its paces yet. However, so far it seems to be working as advertised:

However, be careful: multimodal ChatGPT seems to suffer from all the same issues that regular ChatGPT suffers from. For instance, I gave it a couple of chess puzzles and it completely hallucinated inaccurate responses:

I am pretty sure that these sorts of issues will be sorted out in due time. In the meantime I am going to play some more with it and report on what I find.

Thanks for sharing. Some cool uses of multimodal ChatGPT I hadn't even considered. Can't wait to get my hands on it!

Excellent post highlighting the impact of new AI advancements. Your posts have a high signal-to-noise ratio compared to all of the other posts I’ve seen on X and other platforms.